Overview

Mise-en-Lens is a Next.js web app that teaches film theory through a user's own taste. Instead of starting from abstract concepts, the deployed app starts from a Letterboxd Top 4 the user already loves. The user enters a username or profile URL, and the system pulls posters and titles automatically before generating personalized analysis that connects those specific films to broader concepts in cinematography, directing, social context, and genre.

The project was built as my capstone for COMP 395: Artificial Intelligence and Learning Technologies at Occidental College, taught by Prof. Joel Anderson Walsh. The course asks students to design, prototype, and evaluate a novel AI-driven learning technology grounded in educational theory. Mise-en-Lens was my answer to a specific gap: most people consume film passively, and the tools that exist for deeper engagement assume prior knowledge that casual viewers do not have.

Live Product Experience

The live landing page frames the project as "Film Literacy Through Taste." It keeps the interaction lightweight: paste a Letterboxd username or profile URL, pull the Top 4, learn the patterns, and then continue into a separate quiz page built around the same films.

Pull the Top 4

- Users can paste a username or profile link and let the app fetch posters and titles automatically.

- The initial screen is intentionally simple so the experience starts from taste, not setup friction.

Learn the Patterns

- The lesson surfaces genre, values, artistic form, and social context in beginner-friendly language.

- The output stays tied to the user's actual Top 4 instead of a generic film-theory overview.

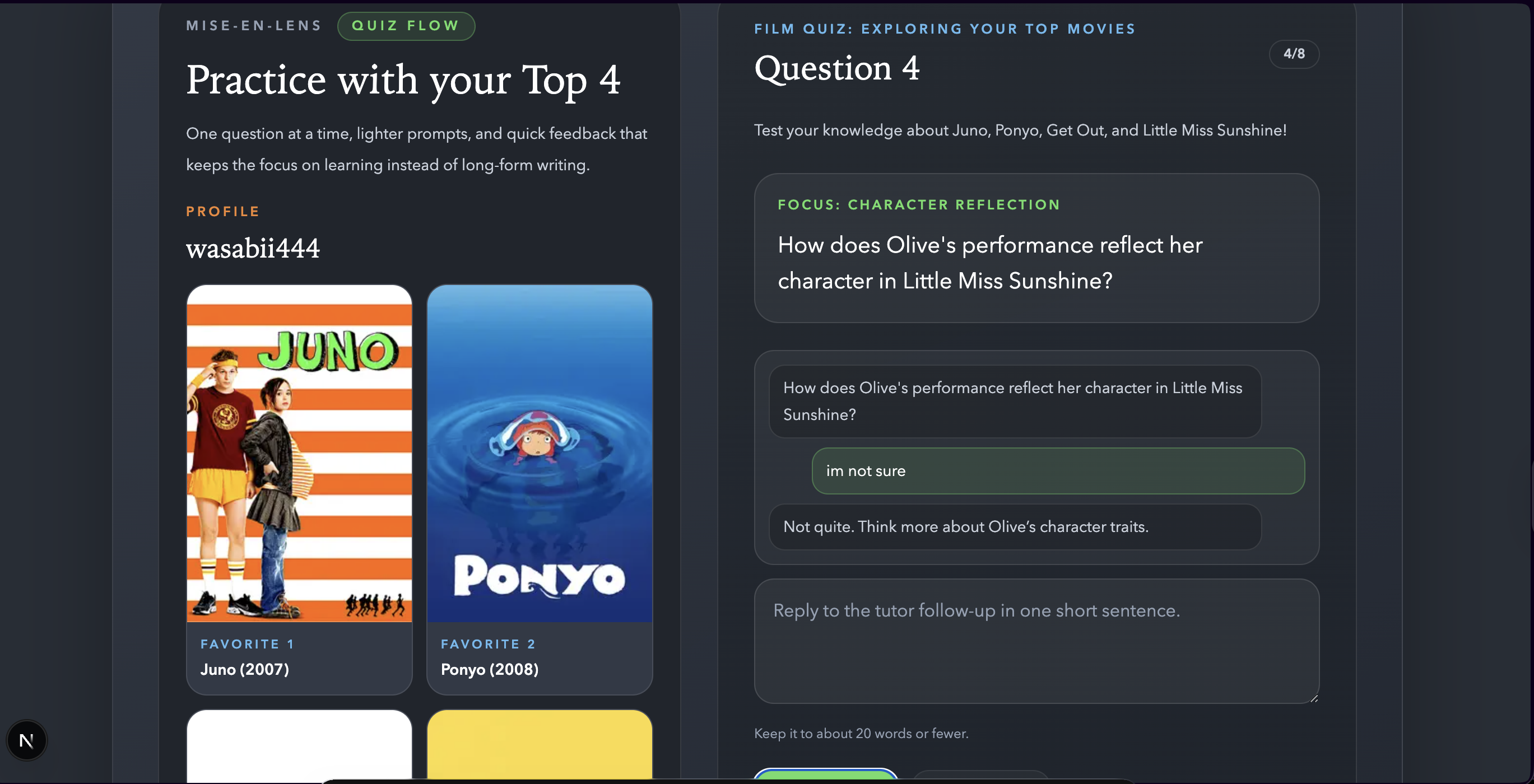

Practice with Quiz

- Quiz mode now lives on a separate page, which keeps the lesson layout focused and easier to scan.

- Questions are delivered one at a time so the feedback loop feels more conversational and less overwhelming.

Built Around Your Design

- Personalized analysis is linked to the user's actual Top 4.

- Artistic elements, social context, and recommendations all point back to educational redirection.

- The empty state invites the user to paste a profile and immediately populate the poster panel.

The Problem

Media literacy is declining. A 2024 study from Media Literacy Now found that barely 38% of participants were taught how to analyze media messaging in high school. At the same time, the 2024 Edelman Trust Barometer found that 54% of respondents felt technology was evolving too quickly to engage with critically. Film is one of the most accessible entry points into critical media analysis, but most people are never given the tools to engage with it that way.

My target learner is someone who watches films regularly but has no formal background in film theory or media studies. They are curious but not academic. They are more likely to have a Letterboxd account than to have read Bazin.

Learning Theory

The design is grounded in two principles from educational theory.

Transfer

- Rather than teaching film concepts in the abstract, the app anchors every concept to a film the user already has an emotional connection to.

- If someone lists Parasite in their Top 4, they already understand tension, spatial metaphor, and class dynamics on a felt level. The app makes that felt understanding explicit and transferable to films they have not yet seen.

Immediate Feedback

- In quiz mode, the app responds to every answer in real time. It does not just mark the answer right or wrong: it explains the concept, connects it back to the user's specific film, and asks a follow-up question that scaffolds toward deeper understanding.

- If a user responds with "I don't know," the system simplifies rather than repeats.
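
The "simplify rather than repeat" rule can be sketched as a small routing step that runs before any feedback is generated. This is only an illustration under stated assumptions: `isStuckReply`, `chooseNextMove`, and the phrase list are hypothetical names and heuristics, not taken from the deployed app, which may detect stuck learners differently (for example, inside the LLM prompt itself).

```typescript
// Hypothetical sketch: decide whether to simplify the question or continue
// with a follow-up. The phrase list is an assumption, not the app's real rule.
type NextMove = "simplify" | "follow_up";

// Replies that consist only of an "I don't know"-style phrase.
const STUCK_PATTERNS: RegExp[] = [
  /^i\s+(?:really\s+)?(?:don't|do not|dont)\s+know[.!?]*$/,
  /^(?:idk|no idea|not sure)[.!?]*$/,
];

function isStuckReply(reply: string): boolean {
  // Normalize case, whitespace, and curly apostrophes before matching.
  const normalized = reply.trim().toLowerCase().replace(/\u2019/g, "'");
  return STUCK_PATTERNS.some((pattern) => pattern.test(normalized));
}

function chooseNextMove(reply: string): NextMove {
  // A stuck learner gets a simpler version of the question, never a repeat.
  return isStuckReply(reply) ? "simplify" : "follow_up";
}
```

Matching only whole-reply phrases is a deliberate choice in this sketch: an answer such as "I don't know, maybe the stairs show class?" still contains an attempt, so it is routed to normal feedback instead of being treated as stuck.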

How It Works

Users enter a Letterboxd username or paste a profile URL. The app scrapes their Top 4 films and displays them with posters, then routes them into either the lesson or the quiz flow.
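
Because the input can be either a bare username or a full profile URL, the first step is normalizing both into one username. The sketch below shows one way that could look. `parseLetterboxdInput` is a hypothetical helper, and it assumes that profile URLs take the form `letterboxd.com/<username>/` and that usernames contain only letters, digits, and underscores. It does not cover the scraping step itself.

```typescript
// Hypothetical helper: turn a pasted username or profile URL into a username.
// Assumes profile URLs look like https://letterboxd.com/<username>/ and that
// usernames use only letters, digits, and underscores.
function parseLetterboxdInput(input: string): string | null {
  const trimmed = input.trim();
  if (trimmed === "") return null;

  // Case 1: a profile URL, with or without the scheme and "www.".
  const urlMatch = trimmed.match(
    /^(?:https?:\/\/)?(?:www\.)?letterboxd\.com\/([^\/?#]+)/i
  );

  // Case 2: a bare username, optionally written with a leading "@".
  const candidate = urlMatch?.[1] ?? trimmed.replace(/^@/, "");

  // Reject anything that does not look like a username under the assumed rule.
  return /^[A-Za-z0-9_]+$/.test(candidate) ? candidate.toLowerCase() : null;
}
```

Returning `null` for unrecognized input lets the landing page show a gentle prompt to re-enter the profile instead of sending a malformed request.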

The deployed interface is designed to feel more like a guided lesson than a static portfolio demo.

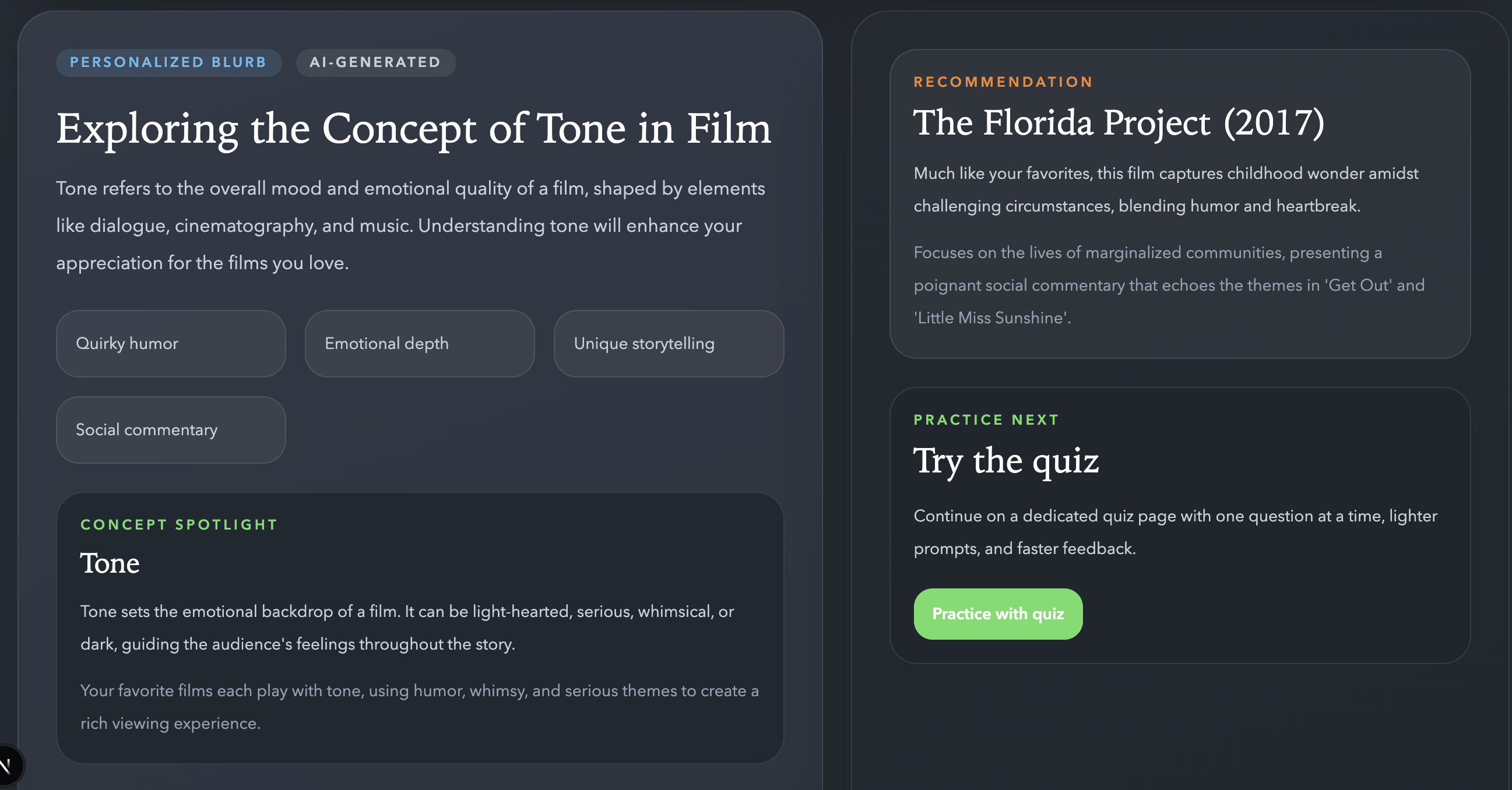

Blurb Mode

- Generates a personalized lesson covering thematic patterns across the four films.

- Explains one film concept in plain language and connects it to a specific film in the user's list.

- Provides societal and historical context behind the films.

- Offers a recommendation with an explanation of why it fits the user's taste.
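
The four parts of the lesson map naturally onto a structured object, which also makes it possible to check that a generated lesson stays tied to the user's Top 4. The following is a sketch only: the `LessonBlurb` shape, its field names, and `checkLesson` are assumptions made for illustration and do not describe the deployed app's actual data model.

```typescript
// Hypothetical shape for a generated lesson; field names are assumptions.
interface LessonBlurb {
  thematicPatterns: string; // patterns across the four films
  concept: { name: string; explanation: string; anchorFilm: string };
  context: string; // societal and historical background
  recommendation: { title: string; reason: string };
}

// Returns a list of problems; an empty list means the lesson passes.
function checkLesson(lesson: LessonBlurb, topFour: readonly string[]): string[] {
  const normalize = (title: string): string => title.trim().toLowerCase();
  const known = topFour.map(normalize);
  const inTopFour = (title: string): boolean =>
    known.indexOf(normalize(title)) !== -1;

  const problems: string[] = [];
  // The concept must be anchored to a film the user actually listed.
  if (!inTopFour(lesson.concept.anchorFilm)) {
    problems.push("concept is not anchored to a Top 4 film");
  }
  // A recommendation should be a new film, with a stated reason.
  if (inTopFour(lesson.recommendation.title)) {
    problems.push("recommendation repeats a Top 4 film");
  }
  if (lesson.recommendation.reason.trim() === "") {
    problems.push("recommendation has no explanation");
  }
  return problems;
}
```

A check like this cannot judge whether the analysis is insightful, but it can catch a lesson that drifts into generic film theory before it reaches the user.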

Quiz Mode

- Generates three questions about the user's specific films. The first is recognition-based. The second asks for a short guided interpretation. The third is a transfer question connecting what they just learned to a different film in their Top 4.

- Each answer gets real-time feedback. If an answer is partially right, the system acknowledges what the user got right and guides them toward the rest.

- If a user is stuck, it breaks the question down rather than repeating it.
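
The three-question progression described above can be sketched as a small planning function that fixes the order of question types before any question text is generated. The names `QuestionPlan` and `planQuiz` are hypothetical, and the choice to focus the first two questions on the first film in the list is an assumption made for illustration. The only rule taken from the design is that the transfer question points at a different Top 4 film.

```typescript
// Hypothetical sketch of the recognition -> interpretation -> transfer order.
type QuestionKind = "recognition" | "interpretation" | "transfer";

interface QuestionPlan {
  kind: QuestionKind;
  focusFilm: string;   // film the question is about
  transferTo?: string; // only set for the transfer question
}

function planQuiz(topFour: readonly string[]): QuestionPlan[] {
  // Assumption: the first listed film is the focus of the quiz.
  const focusFilm = topFour[0];
  // The transfer target must be a different film from the same Top 4.
  const transferTo = topFour.filter((title) => title !== focusFilm)[0];
  if (focusFilm === undefined || transferTo === undefined) {
    throw new Error("Need at least two distinct films to plan a transfer question.");
  }
  return [
    { kind: "recognition", focusFilm },
    { kind: "interpretation", focusFilm },
    { kind: "transfer", focusFilm, transferTo },
  ];
}
```

Planning the sequence separately from generating the question text keeps the scaffolding order fixed even when the wording of each question varies.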

User Testing

User testing is planned with professors in the Media Arts and Culture department at Occidental College and with college-aged Letterboxd users. The testing plan focuses on three areas: whether the output feels insightful and responsive to the user's specific films, whether the interface is easy to navigate, and whether the recommendations feel genuinely connected to their taste rather than generic.

What I Learned

Working within the constraints of a course rubric while building something I actually wanted to exist pushed me to think more carefully about the gap between a technically functional system and a pedagogically sound one. The first version of the quiz was technically correct but pedagogically frustrating. Questions were too open-ended, feedback was too vague, and users could not tell when they had answered well enough to move on.

The pivot toward scaffolded, recognition-first questions came directly from Prof. Walsh's feedback and from watching early testers disengage. The app works better now, but the deeper lesson is that LLM output quality and learning experience quality are not the same thing, and optimizing for one does not automatically improve the other.