Overview

ItEra Studio is an evolving reference discovery system for artists. It interprets creative intent, routes
queries across real art and image databases, and returns credited references with source links and
license-aware filtering. The project started in my freshman year as a Java-based quiz program called
DoodleQuest that generated 20 static art prompts. It has gone through several complete transformations
since then, each one driven by research rather than assumption.

Where It Started

I built the first version of DoodleQuest in COMP181. It was a Java survey that mapped user responses to a
fixed set of 20 prompts. The core idea was right: artists need targeted, growth-oriented challenges rather
than generic ones. But the execution was too rigid and the tool was doing all the thinking for the user.

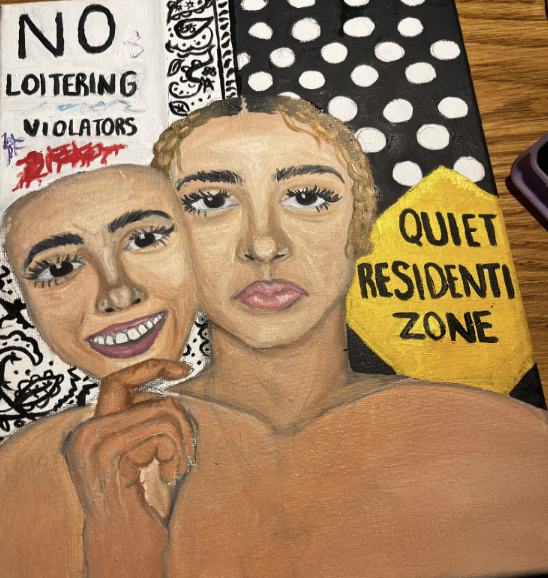

In my sophomore year, I ran an independent study with Professor Sanchez focused specifically on AI
prompting and illustration. Over 14 weeks I created six bodies of work responding to AI-generated prompts
across gesture drawing, color theory, self-expression, traditional media, narrative, and mixed media. I
was not just making art. I was evaluating how AI interacted with the creative process, and documenting
what worked, what did not, and why.

One of the main findings was that AI prompts skew Eurocentric by default. When I asked for gesture drawing
prompts, the suggestions leaned toward yoga poses. Color theory suggestions reflected Western associations
with "warmth" and "calm" without cultural nuance. These are not neutral failures. They are baked-in
biases. I developed a five-criteria rubric for evaluating prompts on creativity, flexibility, clarity,
cultural accessibility, and engagement, and used it to assess how AI performed across different creative
contexts.

The clearest finding: a good prompt does not tell you what to make. It helps you ask better questions.

User Research and the Pivot

Before building further, I needed to know whether artists actually wanted AI critique tools. I designed a
structured user study and distributed it through personal networks and art-focused subreddits and Discord
communities. The study included a Likert scale section where four participants tested existing AI critique
tools and evaluated them across multiple dimensions.

Key findings from the private survey (n=4):

- 75% (3 of 4) agreed the feedback was helpful for improving their work.

- 75% (3 of 4) agreed the feedback felt personalized to their artwork.

- Only 50% (2 of 4) agreed the feedback gave clear, actionable next steps.

- 50% (2 of 4) disagreed that AI feedback made them feel more confident.

- Overall satisfaction averaged 3.75 out of 5 stars.

The open-ended responses were more telling. One participant wrote that they could not tell what the AI was
referring to when it suggested changes to proportions. Another wanted exercises that fit their specific
style, not generic corrections. One said they would take human critique again, but only from someone who
understood what they needed and could deliver it with their confidence in mind.

The public response from Reddit and Discord was more pointed. Responses were overwhelmingly skeptical of
AI critique tools, with concerns around ethics, trust, and the perception that AI cannot genuinely
understand visual intention. One artist captured what would become the direction of ItEra:

"The only real purpose I can see AI being useful for as a tool is an AI that finds specific real reference
images based on a prompt. Every artist struggles to find the perfect reference image at specific
perspective angles and often spends hours searching instead of doing art."

That response was not an outlier. It was a pattern. Artists did not want AI to evaluate their work. They
wanted help finding real work to study.

The pivot was direct: away from critique, toward reference discovery. The role of AI shifted from judge
to navigator.

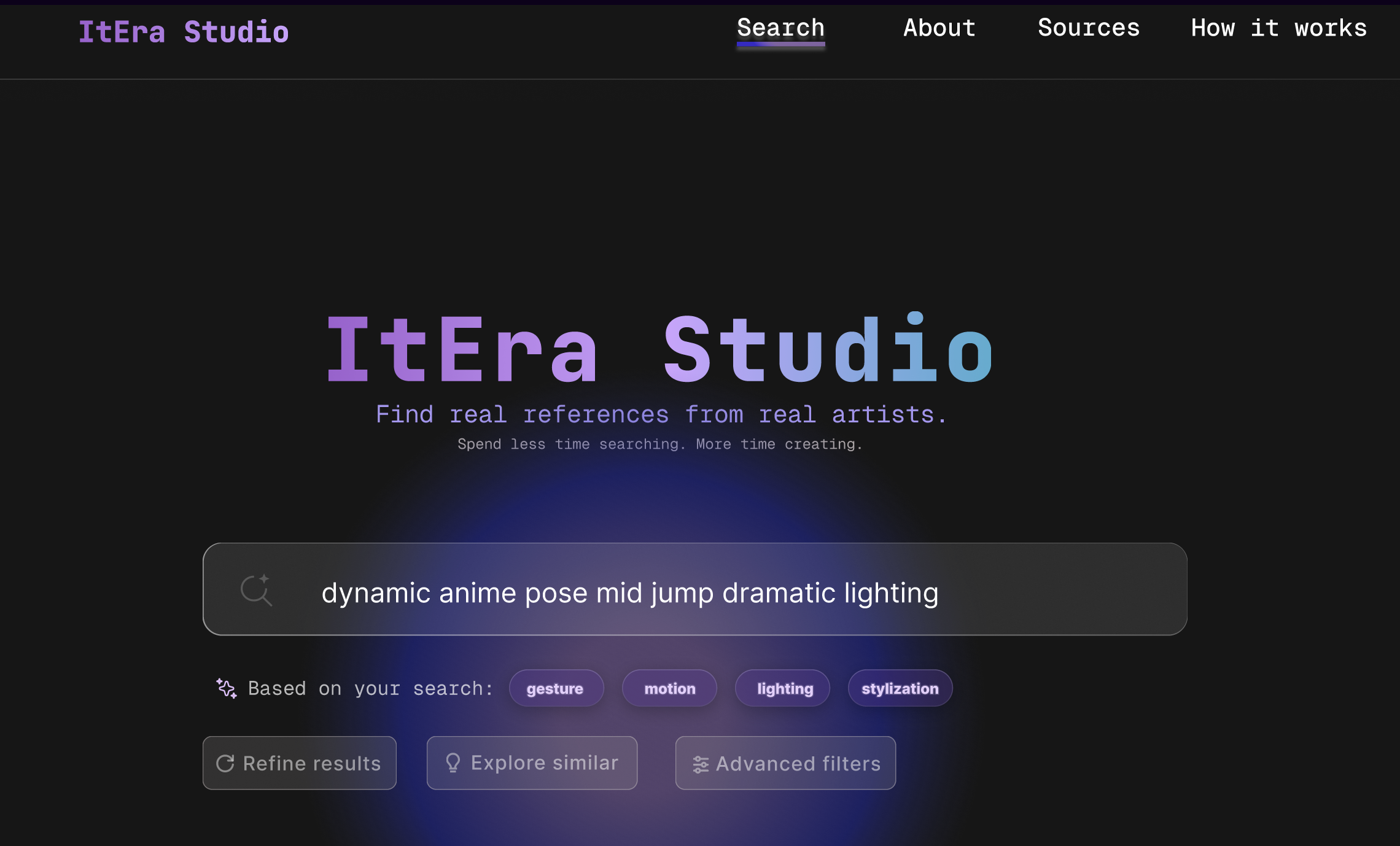

What ItEra Does

ItEra is designed around one idea: connect artists to real references from real artists, with attribution
intact.

A user enters a natural language description of what they are trying to make. The system interprets that
intent, expands it into related visual concepts and terms, and routes the query across multiple databases
simultaneously. Results come back with artist credit, source link, and license information so the user
knows exactly what they are looking at and where it came from.
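
As a rough illustration of the credited, license-aware results described above, the sketch below models a single reference record and a license filter. It is a minimal sketch, not ItEra's actual data model: the `Reference` fields, the `OPEN_LICENSES` allow-list, the helper names, and the example URLs are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reference:
    """One search result with attribution attached (hypothetical field names)."""
    title: str
    artist: str      # artist credit
    source_url: str  # link back to the hosting database
    license: str     # e.g. "CC0", "CC-BY-4.0", "All rights reserved"


# Assumed allow-list; which licenses count as usable is a project decision.
OPEN_LICENSES = frozenset({"CC0", "CC-BY-4.0", "CC-BY-SA-4.0"})


def filter_by_license(refs, allowed=OPEN_LICENSES):
    """Keep only references whose license string is in the allowed set."""
    return [r for r in refs if r.license in allowed]


def credit_line(ref):
    """Format the attribution shown next to a result."""
    return f'"{ref.title}" by {ref.artist} ({ref.license}), {ref.source_url}'


refs = [
    Reference("Harbor Study", "A. Artist", "https://example.org/1", "CC0"),
    Reference("Night Market", "B. Painter", "https://example.org/2", "All rights reserved"),
]
for ref in filter_by_license(refs):
    print(credit_line(ref))
```

Keeping the license as a required field on every record means a result cannot be shown without the information needed to filter or credit it.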

The search interface shows the interpreted query alongside AI-expanded tags so the user can see how their
intent was understood and adjust it. Filters for refining results and exploring similar ones keep the
user in control of the direction.

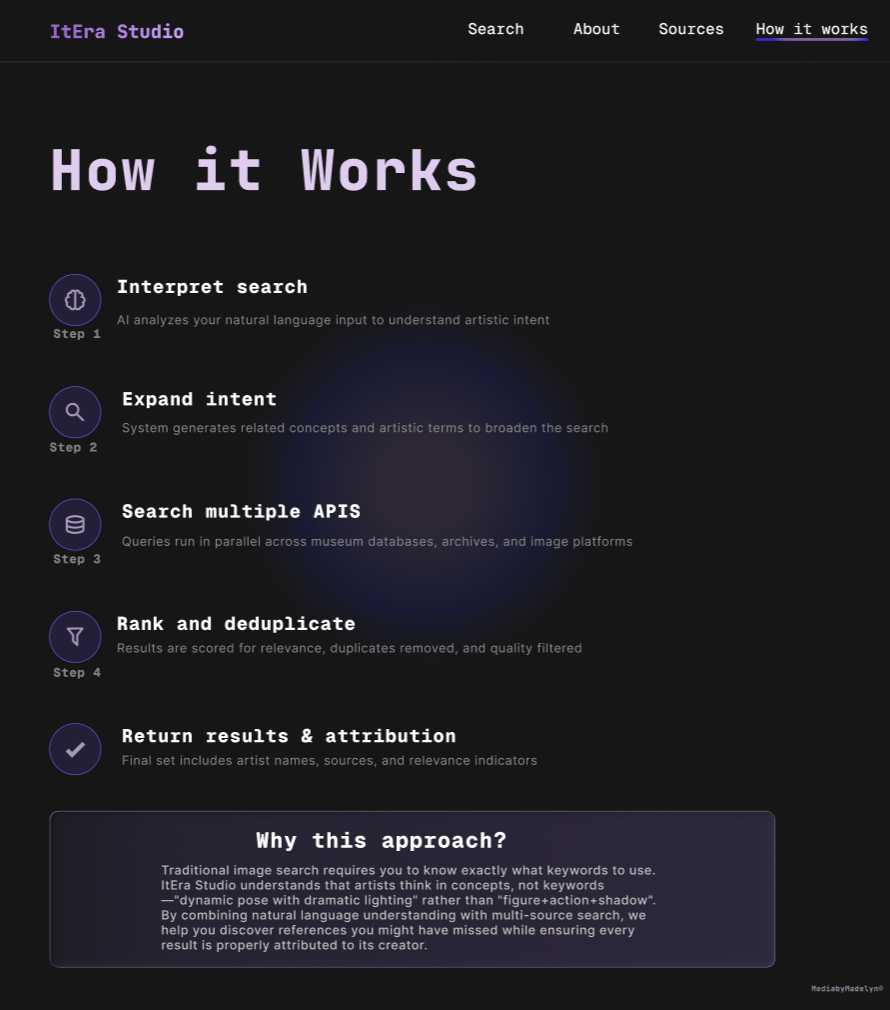

Step 1: Interpret Search

- The AI analyzes natural language input to understand artistic intent.

- Conceptual language, mood, and medium are parsed into structured terms before any query is run.
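
To make the idea of parsing input into structured terms concrete, here is a toy sketch. It swaps the AI interpretation step for a plain keyword lookup, so it only illustrates the shape of the output, not how ItEra interprets language. The `Intent` class, the vocabularies, and the `interpret` function are hypothetical names invented for this example.

```python
from dataclasses import dataclass, field

# Tiny hand-written vocabularies, standing in for a real language model.
MOODS = {"melancholy", "calm", "tense", "joyful"}
MEDIA = {"watercolor", "charcoal", "ink", "gouache", "oil"}
STOPWORDS = {"a", "an", "the", "of", "in", "on", "with", "and", "at", "for"}


@dataclass
class Intent:
    """Structured terms extracted from the user's description."""
    subjects: list = field(default_factory=list)
    moods: list = field(default_factory=list)
    media: list = field(default_factory=list)


def interpret(text):
    """Sort each word of the description into mood, medium, or subject."""
    intent = Intent()
    for raw in text.lower().split():
        word = raw.strip(".,;:!?\"'")
        if not word or word in STOPWORDS:
            continue
        if word in MOODS:
            intent.moods.append(word)
        elif word in MEDIA:
            intent.media.append(word)
        else:
            intent.subjects.append(word)
    return intent


print(interpret("A melancholy watercolor of a harbor at dusk"))
# Intent(subjects=['harbor', 'dusk'], moods=['melancholy'], media=['watercolor'])
```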

Step 2: Expand Intent

- The system generates related visual concepts and search terms from the interpreted intent.

- The query is widened without overriding the user's direction, surfacing adjacent ideas they may
  not have named.
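
One way to widen a query without overriding the user's direction is to keep their own terms first and append a capped number of related terms after them. The sketch below assumes a hand-written `RELATED` lookup table in place of AI-generated concepts. The table contents, the `expand_terms` name, and the `limit_per_term` cap are illustrative assumptions.

```python
# Hypothetical lookup of adjacent concepts; a real system would generate these.
RELATED = {
    "harbor": ["dock", "fishing boats", "waterfront"],
    "dusk": ["twilight", "golden hour"],
}


def expand_terms(terms, related=RELATED, limit_per_term=2):
    """Return the user's terms first, followed by a few related terms each."""
    expanded = list(dict.fromkeys(terms))  # dedupe while preserving order
    seen = set(expanded)
    for term in terms:
        for extra in related.get(term, [])[:limit_per_term]:
            if extra not in seen:
                seen.add(extra)
                expanded.append(extra)
    return expanded


print(expand_terms(["harbor", "dusk"]))
# ['harbor', 'dusk', 'dock', 'fishing boats', 'twilight', 'golden hour']
```

Because the original terms always lead the list, a downstream search can weight them more heavily than the suggestions.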

Step 3: Surface Results

- References are returned from verified databases with artist credit, source link, and license
  information attached.

- Duplicates across sources are removed. Results are ranked by relevance, metadata quality, and
  source trust.
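
The deduplication and ranking described above could be sketched as follows. This is an illustration under stated assumptions, not ItEra's ranking logic: the weights in `WEIGHTS`, the 0-to-1 score ranges, and matching duplicates on normalized artist and title are all choices made for this example.

```python
from dataclasses import dataclass


@dataclass
class Result:
    title: str
    artist: str
    source: str              # which database returned it
    relevance: float         # assumed range 0..1
    metadata_quality: float  # assumed range 0..1
    source_trust: float      # assumed range 0..1


# Assumed weights; the source names the three factors but not their balance.
WEIGHTS = {"relevance": 0.6, "metadata_quality": 0.2, "source_trust": 0.2}


def score(r, weights=WEIGHTS):
    """Weighted sum of the three ranking factors."""
    return (weights["relevance"] * r.relevance
            + weights["metadata_quality"] * r.metadata_quality
            + weights["source_trust"] * r.source_trust)


def dedupe_key(r):
    """Treat results with the same artist and title as the same work."""
    return (r.artist.strip().lower(), r.title.strip().lower())


def dedupe_and_rank(results):
    """Keep the best-scoring copy of each work, then sort by score."""
    best = {}
    for r in results:
        key = dedupe_key(r)
        if key not in best or score(r) > score(best[key]):
            best[key] = r
    return sorted(best.values(), key=score, reverse=True)
```

When the same work appears in two databases, this keeps the copy with the stronger metadata and source trust, so the surviving result is also the one with the most reliable credit.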

Reflection

This project has changed direction more than once because the research kept finding problems with the
previous direction. I am glad I ran the user study when I did. The Reddit response in particular was
direct enough to make the pivot obvious rather than uncertain.

The most important thing I learned is that AI in creative tools works best as a way to expand what
someone is already doing, not as a replacement for their judgment. Every version of ItEra that tried to
evaluate or instruct the user felt wrong. Every version that helped them find and study real work felt
right.

I also documented the Eurocentric bias in AI prompt generation earlier than I expected to. That finding
shaped the research rubric and, eventually, shaped the sourcing priorities for ItEra. Expanding to
non-Western datasets is not an afterthought. It is a founding concern of the project.